What’s the Difference Between Attention and Self-attention in Transformer Models?

“Attention” is one of the key ideas in the transformer architecture. There are plenty of in-depth explanations elsewhere, so here we’d like to share tips on what you can say in an interview.

What’s the difference between attention and self-attention in transformer models?

Here are some example answers for readers’ reference:

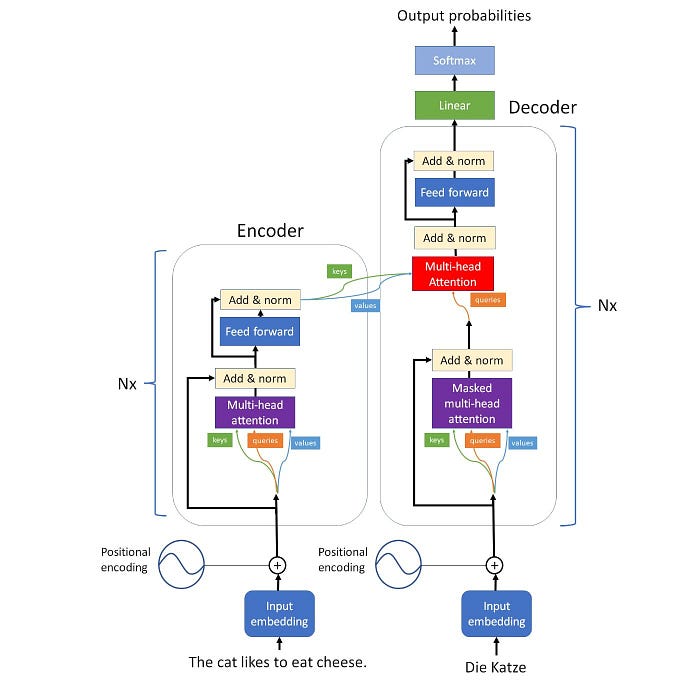

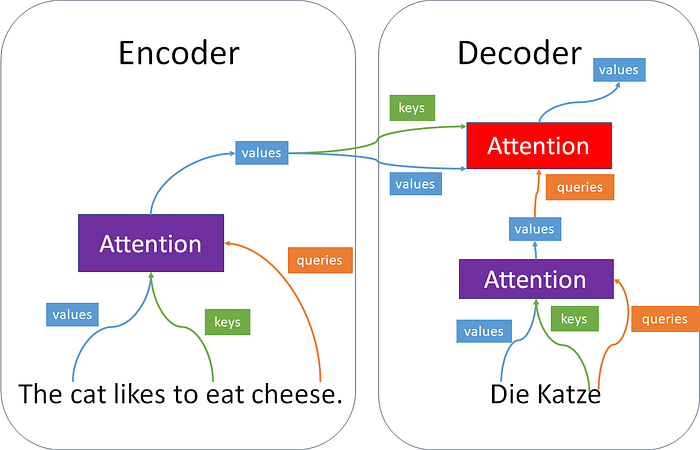

The attention that connects the encoder and the decoder is called cross-attention, because the keys and values are generated from a different sequence than the queries: the queries come from the decoder, while the keys and values come from the encoder output. If the keys, values, and queries are all generated from the same sequence, we call it self-attention. In short, cross-attention lets each output position focus on the relevant parts of the input while the output is being produced, whereas self-attention lets the elements of a single sequence interact with each other.
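To make the distinction concrete, here is a minimal NumPy sketch of single-head scaled dot-product attention. It is an illustration under stated assumptions rather than a reference implementation: the function name `attention`, the toy sizes, and the random projection matrices are all hypothetical, there is no masking or multi-head splitting, and the same projection weights are reused for both calls purely for brevity (a real transformer learns separate weights for each attention layer). The only thing that changes between self-attention and cross-attention is which sequence supplies the keys and values.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(query_source, key_value_source, w_q, w_k, w_v):
    """Single-head scaled dot-product attention (no masking).

    Queries are projected from `query_source`; keys and values are
    projected from `key_value_source`.
    """
    q = query_source @ w_q
    k = key_value_source @ w_k
    v = key_value_source @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])   # (n_queries, n_keys)
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights

# Toy setup (hypothetical sizes and random weights, for illustration only).
rng = np.random.default_rng(0)
d_model = 8
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

encoder_states = rng.normal(size=(5, d_model))  # 5 input tokens
decoder_states = rng.normal(size=(3, d_model))  # 3 output tokens so far

# Self-attention: queries, keys, and values all come from the same sequence.
self_out, self_weights = attention(encoder_states, encoder_states, w_q, w_k, w_v)

# Cross-attention: queries come from the decoder, keys/values from the encoder.
cross_out, cross_weights = attention(decoder_states, encoder_states, w_q, w_k, w_v)

print(self_weights.shape)   # one row per input token, one column per input token
print(cross_weights.shape)  # one row per output token, one column per input token
```

In an interview, the shapes of the weight matrices are a handy way to explain the difference: self-attention yields a square matrix (every token attends to every token of the same sequence), while cross-attention yields one row per output position and one column per input position.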

Watch Cohere founder Dr. Aidan Gomez’s explanation and visual illustration in the video listed under the sources below.

Happy practicing!

Note: There are different angles from which to answer an interview question. The author of this newsletter does not try to find a reference that answers a question exhaustively. Rather, the author would like to share some quick insights and help readers think, practice, and do further research as necessary.

Source of video: CS25 I Stanford Seminar — Self Attention and Non-parametric transformers (NPTs). 2022.

Source of answers/images: Medium — Attention is all you need by Vincent Mueller. 2021. GeeksforGeeks — Self-attention in NLP. 2020.

Good reads: Modern Approaches in Natural Language Processing by Carolin Becker et al. 2020.