What is Global Attention in Deep Learning?

Global and local attention are among the early attention mechanisms that inspired today’s well-known transformer models. There are plenty of in-depth explanations elsewhere, so here I’d like to share tips on what you can say in an interview setting.

What is global attention?

Context: In their 2015 paper “Effective Approaches to Attention-based Neural Machine Translation,” Stanford NLP researchers Minh-Thang Luong et al. proposed an attention mechanism for the encoder-decoder model in machine translation called “global attention.”

Here are some example answers for readers’ reference:

Global Attention is one of the simplest attention mechanisms.

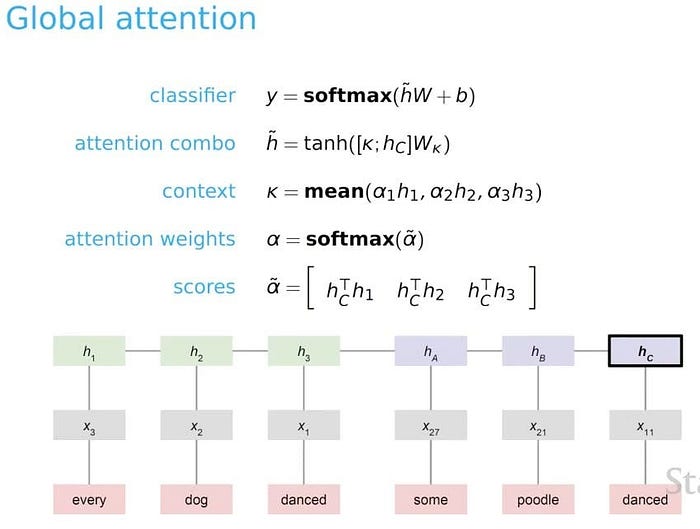

The idea of a global attentional model is to consider all the hidden states of the encoder when deriving the context vector k (below). In this type of model, a variable-length alignment vector 𝛼 tilde, whose size equals the number of time steps on the source side, is derived by comparing the current target hidden state hc with each source hidden state hi. The goal is to derive a context vector k that captures relevant source-side information to help predict the current target word. With the alignment vector as weights, the context vector k is computed as the weighted average over all the source hidden states.
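If it helps to make the description above concrete, here is a minimal NumPy sketch. It assumes the dot product as the comparison function (one of the scoring options in the Luong et al. paper) and a softmax to turn the scores into weights that sum to one. The function name `global_attention` and the toy numbers are made up for illustration and do not come from the paper or the lecture.

```python
import numpy as np

def global_attention(h_c, H):
    """Dot-product global attention (illustrative sketch).

    h_c: current target hidden state, shape (d,)
    H:   all source hidden states, shape (T, d), one row per source time step
    Returns (weights, context) with shapes (T,) and (d,).
    """
    scores = H @ h_c                    # one unnormalized score per source step
    scores = scores - scores.max()      # shift for numerical stability
    weights = np.exp(scores) / np.exp(scores).sum()  # softmax: weights sum to 1
    context = weights @ H               # weighted average of the source states
    return weights, context

# Made-up example: 3 source time steps, hidden size 2.
H = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.0, 1.0]])
h_c = np.array([1.0, 0.5])
weights, context = global_attention(h_c, H)
print(weights, context)
```

Note that the weights have one entry per source time step, so their length changes with the source sentence, which is what “variable-length” means here. In this toy example the third source state has the largest dot product with hc, so it receives the largest weight.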

Watch the explanation by Professor Christopher Potts from Stanford (Tip: watch a bit longer for a more concrete numerical example!):

Happy practicing!

Thanks for reading my newsletter. You can follow me on LinkedIn! The original post is here.

Note: There are different angles from which to answer an interview question. The author of this newsletter does not try to find a reference that answers a question exhaustively. Rather, the author would like to share some quick insights and help readers think, practice, and do further research as necessary.

Source of video: Attention | Stanford CS224U Natural Language Understanding | Spring 2021 by Professor Christopher Potts from Stanford

Source of images/answers: the 2015 paper “Effective Approaches to Attention-based Neural Machine Translation” by Stanford NLP researchers Minh-Thang Luong et al.; Wikipedia, “Transformer (machine learning model).”

Good reads: Two minutes NLP — Visualizing Global vs Local Attention by Fabio Chiusano Blog post. Gentle Introduction to Global Attention for Encoder-Decoder Recurrent Neural Networks by Jason Brownlee Blog post. Attention Mechanism in Neural Networks